Ram Prices Projected To Plummet

- Thread starter BloodOmen

- Joined: Dec 11, 1997

- Messages: 9,077,611

As much as I want this to happen, surely it will require software companies to adopt this new code to make use of the algorithm? Or is it some form of hypervisor memory-management process that can also compress memory (which has been done before)?

BloodOmen

I am a FH squatter

- Joined: Jan 27, 2004

- Messages: 18,678

- Thread starter

- #3

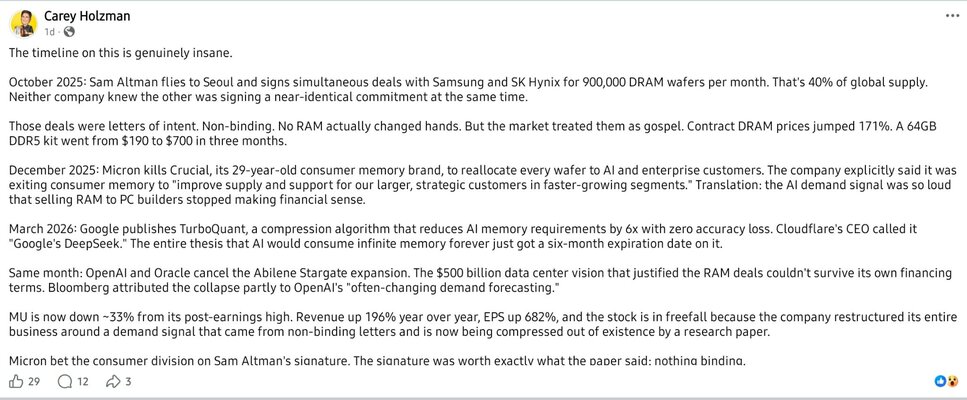

The magic AI crystal ball says this about it:

What TurboQuant is

- A new AI memory compression algorithm from Google

- Designed to reduce how much RAM / VRAM AI models need

- Focuses on something called the “key-value cache” (basically the short-term memory AI uses when generating responses)
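For context, the "key-value cache" here is the attention cache inside a transformer model: for every token already processed, each layer keeps a key vector and a value vector so they don't have to be recomputed when the next token is generated. It therefore grows linearly with the length of the conversation. A rough sketch of the size arithmetic, assuming a hypothetical model shape (32 layers, 8 key/value heads, head dimension 128, a 32,768-token context) and 16-bit storage; `kv_cache_bytes` is an illustrative helper, not part of any library:

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   seq_len: int, bits_per_value: float = 16) -> float:
    """Approximate attention KV cache size in bytes (hypothetical helper).

    The factor of 2 counts one key vector and one value vector per layer,
    per key/value head, per token. Metadata such as quantization scales is ignored.
    """
    values_stored = 2 * num_layers * num_kv_heads * head_dim * seq_len
    return values_stored * bits_per_value / 8


# Hypothetical model shape, not any specific product.
SHAPE = dict(num_layers=32, num_kv_heads=8, head_dim=128)
CONTEXT = 32_768

per_token = kv_cache_bytes(**SHAPE, seq_len=1)
fp16 = kv_cache_bytes(**SHAPE, seq_len=CONTEXT, bits_per_value=16)
six_x = kv_cache_bytes(**SHAPE, seq_len=CONTEXT, bits_per_value=16 / 6)

print(f"per token (16-bit): {per_token / 2**10:.0f} KiB")
print(f"full context, 16-bit: {fp16 / 2**30:.2f} GiB")
print(f"full context, ~2.7 bits/value: {six_x / 2**30:.2f} GiB")
print(f"reduction: {fp16 / six_x:.1f}x")
```

With these assumed numbers the 16-bit cache comes to 4 GiB (128 KiB per token), and a 6× cut corresponds to an average of about 16 / 6 ≈ 2.7 bits per stored value, ignoring any per-vector metadata.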

What it actually does

- Cuts key-value cache memory usage by ~6× (six times less RAM needed for that cache)

- Maintains accuracy (no noticeable loss in performance)

- Can even speed things up in some cases

👉 Same AI capability, but with way less memory required.
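The "six times less memory" figure comes from storing each cached number in far fewer bits than the usual 16. The sketch below is not Google's TurboQuant; it is a simplified, hypothetical illustration of the broader rotate-then-quantize family of techniques: random sign flips plus a Hadamard transform spread an outlier value across all coordinates, and each coordinate is then rounded to one of 2^bits evenly spaced levels. The 128-dimensional vector, 3 bits per value, the outlier at index 5 and the 32 bits of per-vector overhead (two 16-bit numbers for the offset and step size) are all assumptions.

```python
import math
import random


def fwht(x):
    """Orthonormal fast Walsh-Hadamard transform; len(x) must be a power of two.

    Applying it twice returns the original vector (the matrix is its own inverse).
    """
    x = list(x)
    n = len(x)
    h = 1
    while h < n:
        for start in range(0, n, 2 * h):
            for j in range(start, start + h):
                a, b = x[j], x[j + h]
                x[j], x[j + h] = a + b, a - b
        h *= 2
    norm = math.sqrt(n)
    return [v / norm for v in x]


def rotate(x, signs):
    # Random sign flips, then a Hadamard transform: spreads any single large
    # coordinate across all coordinates before quantization.
    return fwht([s * v for s, v in zip(signs, x)])


def unrotate(y, signs):
    # Inverse of rotate(): Hadamard again, then the same sign flips.
    return [s * v for s, v in zip(signs, fwht(y))]


def quantize(x, bits):
    """Uniform min/max quantization of one vector to `bits` bits per coordinate."""
    lo, hi = min(x), max(x)
    levels = 2 ** bits - 1
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((v - lo) / scale) for v in x]  # integers in [0, levels]
    return codes, lo, scale


def dequantize(codes, lo, scale):
    return [lo + c * scale for c in codes]


def compression_ratio(dim, bits, baseline_bits=16, overhead_bits=32):
    # overhead_bits: assumed cost of storing `lo` and `scale` as two 16-bit floats.
    return baseline_bits * dim / (bits * dim + overhead_bits)


def rel_error(original, approx):
    # ||original - approx|| / ||original||
    err = math.sqrt(sum((a - b) ** 2 for a, b in zip(original, approx)))
    return err / math.sqrt(sum(a * a for a in original))


rng = random.Random(0)
dim, bits = 128, 3
key = [rng.gauss(0.0, 1.0) for _ in range(dim)]
key[5] = 20.0  # hypothetical outlier channel
signs = [rng.choice((-1.0, 1.0)) for _ in range(dim)]

plain = dequantize(*quantize(key, bits))
rotated = unrotate(dequantize(*quantize(rotate(key, signs), bits)), signs)

print(f"compression vs 16-bit: {compression_ratio(dim, bits):.2f}x")
print(f"relative error, no rotation:   {rel_error(key, plain):.3f}")
print(f"relative error, with rotation: {rel_error(key, rotated):.3f}")
```

Even in this toy, the per-vector overhead matters: at 3 bits and 128 dimensions the ratio is 2048 / 416, about 4.9×, so getting to ~6× takes fewer bits per value or less overhead. Whether model accuracy is preserved depends on how attention uses the reconstructed keys and values, which this sketch does not measure.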

BloodOmen

I am a FH squatter

- Joined: Jan 27, 2004

- Messages: 18,678

- Thread starter

- #5

Six times less RAM needed, or is AI going to be six times better?

I think I'll wait for the bubble to burst; then there will be lots of very cheap RAM.

If only. The bubble isn't far off bursting, I reckon. Even Disney can see the bubble's about to go: they pulled $1 billion of investment from Sora/ChatGPT, and other big investors have also pulled out or cut their investment by a lot. I think investors are starting to realise they won't see returns for a long time, because the datacentres haven't even been built yet and are likely still 5+ years away (if they get built at all, given regulations and pushback).

- Joined: Dec 11, 1997

- Messages: 9,077,611

Key-Value caches have been around for years; FreddysHouse runs one and has done for a very long time. That is not new tech; go check out Redis - The Real-time Data Platform.
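For comparison, "key-value cache" covers two different things in this thread. A Redis-style cache is an application-level store that maps keys (for example a page URL) to values (for example rendered HTML) and evicts old entries when it fills up; the KV cache TurboQuant targets holds per-token attention vectors inside a running AI model. A minimal in-process sketch of the first kind, using Python's `collections.OrderedDict` for least-recently-used eviction; the class and the example keys are hypothetical, and this is not Redis's API:

```python
from collections import OrderedDict


class LRUCache:
    """Minimal application-level key-value cache with least-recently-used eviction."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self._data = OrderedDict()  # key -> value, least recently used first

    def get(self, key: str):
        if key not in self._data:
            return None
        self._data.move_to_end(key)  # mark as most recently used
        return self._data[key]

    def set(self, key: str, value: str) -> None:
        self._data[key] = value
        self._data.move_to_end(key)
        if len(self._data) > self.capacity:
            self._data.popitem(last=False)  # evict the least recently used entry


cache = LRUCache(capacity=2)
cache.set("/threads/1", "<html>thread 1</html>")
cache.set("/threads/2", "<html>thread 2</html>")
cache.get("/threads/1")                            # thread 1 becomes most recent
cache.set("/threads/3", "<html>thread 3</html>")  # over capacity: evicts thread 2
print(cache.get("/threads/2"))  # None
```

Redis layers networking, persistence, expiry times and configurable eviction policies over the same basic get/set idea.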
